
Pure uncloaks rack-scale FlashBlade object filer for unstructured data crowd

Hardware rack 'em and file/object software stack 'em

FlashBlade is Pure Storage’s rack-scale flash system for multi-protocol access to unstructured data and the first all-flash object-based unstructured data storage system.

Its rack-scale design differs from EMC’s DSSD rack-scale flash system, which is primarily for latency-sensitive structured data.

It is a companion product line to the existing FlashArray//m, which is for structured data and is in the same general product class as EMC’s XtremIO arrays.

The FlashBlade design involves 15 side-by-side blades in a 4U box, and the boxes can scale out to multi-petabyte levels of capacity.

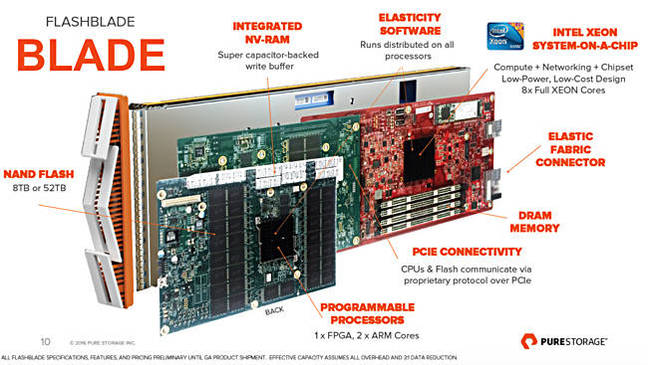

A blade, effectively a complete object node server on a carrier, has three cards affixed to it:

- Compute – 8-core Xeon system-on-a-chip – and an Elastic Fabric Connector for external, off-blade, 40GbitE networking,

- Storage – NAND flash with 8TB or 52TB of raw capacity, plus on-board NV-RAM with a super-capacitor-backed write buffer, a pair of ARM CPU cores and an FPGA,

- On-blade networking – PCIe card to link compute and storage cards via a proprietary protocol.

The type of NAND is not revealed. We note that FlashArray//m systems use Samsung 3D V-NAND TLC (3 bits/cell) flash, and it wouldn’t be surprising if FlashBlade used it too.

Like Violin Memory with its VIMMs and EMC with DSSD, Pure is not using SSDs. It has designed its own flash modules instead and integrated them with compute and networking on a single carrier.

The blades run Elasticity software, which is distributed across the Xeon and ARM CPUs. The blades are linked by Elastic Fabric Connectors to form a cluster. A FlashBlade enclosure can have up to 1.6PB of effective capacity*, assuming 3:1 data reduction, and 15GB/sec of bandwidth, with 120 Xeon cores in total (15 blades × 8 cores).

Frontal view of FlashBlade enclosure

A full rack could house 10 FlashBlade enclosures, offering 16PB of effective capacity, 150GB/sec of bandwidth and 1,200 CPU cores. Pure has not indicated any FlashBlade cluster size limits.
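The enclosure and rack figures are simple multiples of the blade specs. This minimal Python sketch reproduces them; the class and function names are ours, and it assumes the 1.6PB figure refers to 52TB blades (15 × 8TB blades could not reach it even at 3:1) and that everything scales linearly with enclosure count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnclosureSpec:
    """Launch figures for one 4U FlashBlade enclosure (52TB-blade configuration assumed)."""
    blades: int = 15
    cores_per_blade: int = 8       # 8-core Xeon SoC on each blade
    raw_tb_per_blade: int = 52     # larger of the two blade capacities
    effective_tb: int = 1_600      # 1.6PB effective, after overhead and 3:1 reduction
    reduction_ratio: int = 3
    bandwidth_gb_s: int = 15


def enclosure_summary(spec: EnclosureSpec) -> dict:
    raw_tb = spec.blades * spec.raw_tb_per_blade
    usable_tb = spec.effective_tb / spec.reduction_ratio  # capacity before data reduction
    return {
        "xeon_cores": spec.blades * spec.cores_per_blade,
        "raw_tb": raw_tb,
        "usable_tb": usable_tb,
        # Our inference, not a Pure figure: share of raw flash left after overhead.
        "usable_fraction_of_raw": usable_tb / raw_tb,
    }


def rack_totals(spec: EnclosureSpec, enclosures: int = 10) -> dict:
    # Assumes capacity, bandwidth and cores all scale linearly with enclosure count.
    return {
        "effective_pb": spec.effective_tb * enclosures / 1_000,
        "bandwidth_gb_s": spec.bandwidth_gb_s * enclosures,
        "xeon_cores": spec.blades * spec.cores_per_blade * enclosures,
    }


spec = EnclosureSpec()
print(enclosure_summary(spec))
print(rack_totals(spec))
```

With the defaults it reports 120 Xeon cores and 780TB of raw flash per enclosure, and 16PB, 150GB/sec and 1,200 cores for a ten-enclosure rack. The implied usable-to-raw ratio of roughly 68 per cent is only our back-calculation.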

Client system access is via Ethernet.

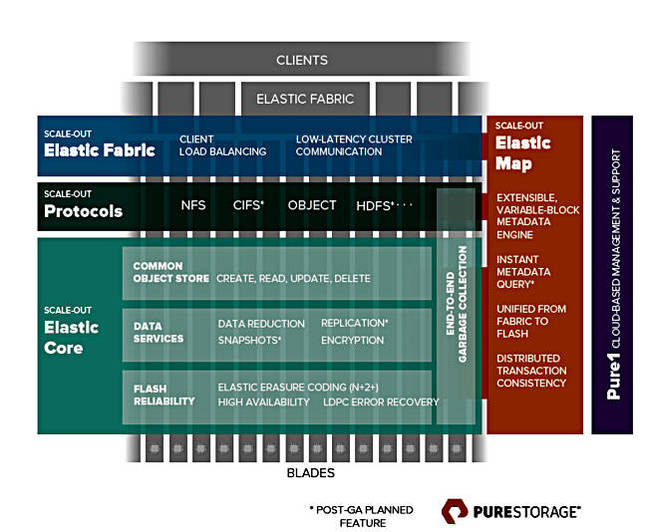

Elasticity software

The Elasticity software provides a common, scale-out object store accessed through file protocols (NFS and CIFS***) and object protocols, and it is designed to take on further protocols: HDFS is coming, and maybe S3 and other access protocols as well.

FlashBlade software stack.

The Elasticity software stack features:

- Scale-out Elastic Core with end-to-end garbage collection:
  - N+2+ erasure coding
  - LDPC error recovery
  - High-availability

- Data services:
  - Data reduction
  - Snapshots
  - Replication
  - Encryption

- Object store:
  - Create, read, update and delete functions

File and object access protocols are layered on this core.
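"N+2+" erasure coding means, in general terms, that each stripe of N data pieces carries at least two parity pieces, so it survives the loss of any two. Pure hasn't said which code Elasticity uses. This minimal Python sketch uses the textbook RAID-6 P+Q scheme over GF(2^8) as a stand-in, with a hypothetical 13+2 stripe spread across an enclosure's 15 blades; the function names are ours.

```python
from typing import Optional


def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8), reducing by x^8+x^4+x^3+x^2+1 (0x11d)."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11D
        b >>= 1
    return result


def gf_pow(a: int, n: int) -> int:
    result = 1
    for _ in range(n):
        result = gf_mul(result, a)
    return result


def gf_inv(a: int) -> int:
    # Every non-zero element satisfies a^255 == 1, so a^254 is its inverse.
    return gf_pow(a, 254)


def encode(data: list[bytes]) -> tuple[bytes, bytes]:
    """Return (P, Q) parity for N equal-length data shards: P = XOR, Q = sum of 2^i * D_i."""
    p, q = bytearray(len(data[0])), bytearray(len(data[0]))
    for i, shard in enumerate(data):
        coeff = gf_pow(2, i)
        for j, byte in enumerate(shard):
            p[j] ^= byte
            q[j] ^= gf_mul(coeff, byte)
    return bytes(p), bytes(q)


def recover_two(data: list[Optional[bytes]], p: bytes, q: bytes, x: int, y: int) -> list[bytes]:
    """Rebuild lost data shards x and y (None in `data`) from the survivors plus P and Q."""
    if x == y:
        raise ValueError("x and y must differ")
    pxy, qxy = bytearray(p), bytearray(q)
    for i, shard in enumerate(data):
        if i in (x, y):
            continue
        coeff = gf_pow(2, i)
        for j, byte in enumerate(shard):
            pxy[j] ^= byte  # leaves D_x ^ D_y
            qxy[j] ^= gf_mul(coeff, byte)  # leaves g^x*D_x ^ g^y*D_y
    gx, gy = gf_pow(2, x), gf_pow(2, y)
    denom_inv = gf_inv(gx ^ gy)
    dx = bytes(gf_mul(gf_mul(gy, pxy[j]) ^ qxy[j], denom_inv) for j in range(len(p)))
    dy = bytes(pxy[j] ^ dx[j] for j in range(len(p)))
    rebuilt = list(data)
    rebuilt[x], rebuilt[y] = dx, dy
    return rebuilt


if __name__ == "__main__":
    shards = [bytes([i] * 8) for i in range(13)]  # hypothetical 13 data shards
    p, q = encode(shards)
    damaged = list(shards)
    damaged[3] = damaged[7] = None  # two blades lost
    assert recover_two(damaged, p, q, 3, 7) == shards
    print("both lost shards rebuilt")
```

A 13+2 stripe keeps 13/15, or about 87 per cent, of raw capacity for data. Pure's actual stripe width, and what the trailing "+" adds, are not disclosed.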

The Elastic Fabric provides client load-balancing and low-latency cluster communications.

An Elastic Map provides metadata functions:

- Extensible and variable block size metadata engine

- Metadata query

- Unified across flash stores in the fabric

- Distributed transaction consistency

Elasticity has a namespace and metadata addressing capability that can cope with “creating over 100 million unique objects/files every second for 20 years.” With 31,536,000 seconds in a (non-leap) year, that comes to roughly 63,072,000,000,000,000 objects; some 63 quadrillion, give or take the odd billion or two.
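Pure's 20-year claim is easy to check, and it also hints at how wide an identifier such a namespace would need. This minimal Python sketch ignores leap days. The "bits for a flat sequential ID" figure is purely our illustration, as Pure hasn't said how Elasticity addresses objects.

```python
RATE_PER_SECOND = 100_000_000           # "over 100 million unique objects/files every second"
SECONDS_PER_YEAR = 365 * 24 * 60 * 60   # 31,536,000; leap days ignored
YEARS = 20

total_objects = RATE_PER_SECOND * SECONDS_PER_YEAR * YEARS
print(f"{total_objects:,}")                 # 63,072,000,000,000,000

# Bits needed to number objects 0 .. total_objects - 1 with a simple counter
# (a hypothetical scheme, not Pure's disclosed design).
print((total_objects - 1).bit_length())     # 56
```

So a 64-bit counter would cover the claim with room to spare, although, again, that illustrates the scale rather than describing Elasticity's design.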

System upgrades to support a larger address space are non-disruptive.

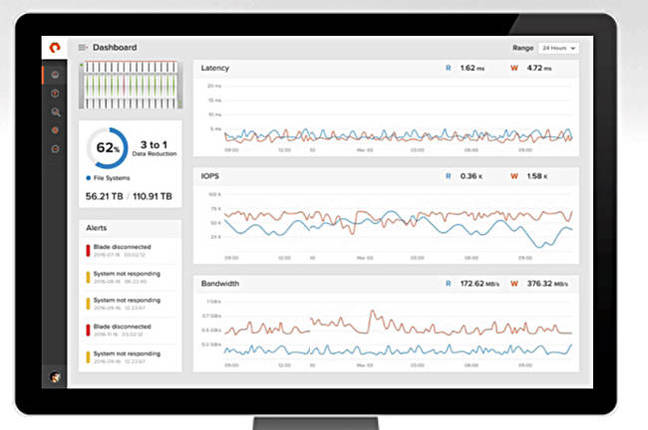

Management

Management is by the cloud-based Pure1 facility. There is a web-based GUI and a REST API. Pure says “anyone can manage FlashBlade – at any scale.”

FlashBlade GUI screenshot.

FlashBlade in the market

FlashBlade is designed for use cases involving potentially massive unstructured data sets from the Internet of Things, log and machine data, and cloud-native web-scale applications. Think also of large-scale simulations, such as traditional weather forecasting and airflow analysis, security threat analysis, and Big Data analytics.

Pure says FlashBlade grows capacity, IO and metadata performance, bandwidth, and client connectivity linearly as blades are added non-disruptively. It claims FlashBlade provides consistently low latency which, we think, should make it far faster than disk-based object storage systems, such as those from IBM/Cleversafe and Scality, and probably faster at file access than scale-out filers such as Isilon. We have not seen any comparative performance numbers.

It will be more space-efficient than disk-based systems, Pure says, claiming it replaces “racks of storage with its 4U form-factor.”

Rear view of FlashBlade enclosure

A picture of the FlashBlade enclosure’s rear end shows four power sockets and two pairs of connectors with what look like two Ethernet ports.

Its power draw is rated at 1,300 watts/PB. And, Pure says, it is affordable, costing less than $1 per GB of usable capacity.
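Pure's two ratings lend themselves to a back-of-the-envelope calculator. This minimal Python sketch assumes decimal units (1PB = 1,000,000GB) and applies both rates to whatever capacity you plug in. Pure hasn't said whether the watts/PB rating refers to raw, usable or effective capacity, and the function names are ours.

```python
WATTS_PER_PB = 1_300         # Pure's power rating
MAX_DOLLARS_PER_GB = 1.0     # Pure: "less than $1 per GB of usable capacity"
GB_PER_PB = 1_000_000        # decimal units, as storage vendors quote them


def power_watts(capacity_pb: float) -> float:
    """Estimated power draw for a given capacity at the quoted rating."""
    return WATTS_PER_PB * capacity_pb


def cost_ceiling_dollars(usable_pb: float) -> float:
    """Upper bound on price implied by the sub-$1/GB claim."""
    return MAX_DOLLARS_PER_GB * usable_pb * GB_PER_PB


for pb in (1.0, 1.6, 16.0):
    print(f"{pb:>5} PB: ~{power_watts(pb):,.0f} W, under ${cost_ceiling_dollars(pb):,.0f}")
```

On those assumptions a petabyte draws about 1.3kW and costs under $1m; how the numbers apply to a real enclosure depends on which capacity basis Pure's ratings use.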

Overview and availability

No other supplier has an all-flash, scale-out, file and object storage system, a combined NAS and object super-charger.**

A chip designer involved in an early access program said FlashBlade provided 50 per cent more throughput and shortened simulation time by more than 20 per cent compared to a hybrid flash/disk filer. An automotive company had a fourfold increase in simulation speed and was able to run three times as many concurrent simulations.

With this announcement, Pure has opened up a second all-flash array product line and extended its total addressable market into the scale-out file and object storage area, meaning large-scale simulation, genome processing, and general analytics. Think of areas such as oil and gas, life sciences, mechanical and electrical engineering, chip design, data-intensive entertainment and media processing, energy, financial services, utilities, and the like.

Depending upon the pricing and multi-tenancy features, FlashBlade could also be of interest to cloud service providers.

Pure says that, with FlashBlade for file and object unstructured data access and its existing FlashArray for structured block data access and running virtual machines, customers have a complete flash-based platform pair for on-premises (private cloud) storage needs.

It is available through an Early Access Program for select customers. A directed availability release for production workloads is planned for the second half of the year, quite a long time ahead.

We suppose general availability will occur in late 2016 or 2017. If you are interested in participating in the Early Access Program, please contact a Pure Storage reseller. ®

* Effective capacity is measured after all system overhead and assumes 3:1 data reduction.

** We might imagine NetApp’s SolidFire unit could enhance its scale-out flash array offering to embrace unstructured data as well.

*** CIFS, like HDFS, is coming after the first release. That could mean they are a year away.