
Loved-up shared storage silos in SHOCK SPLIT!

Good news or bad news? Depends if you're a channel bod...

Blocks and Files A previously uniform shared storage architecture is splintering apart as server virtualisation, dedupe, flash and the cloud shatter the old order – giving us five steps to heaven, or hell, depending on your viewpoint.

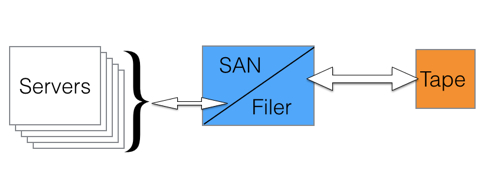

The old shared storage order was a bunch of servers linked across a network to a storage area network (SAN) or network-attached storage (NAS or filer) with old data backed up and archived to tape.

Networked storage - the starting state

Networking was Fibre Channel for blocks and TCP/IP over an Ethernet LAN or a WAN for files. Simple and straightforward. And then a far-reaching change came along.

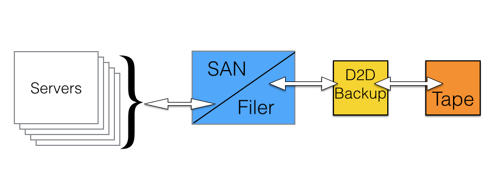

Storage step 2 - deduping backup to disk arrives.

The provision of deduplication made backup to disk practical, with a much lower effective cost/GB than before. This, paired with the attraction of fast disk access compared to slow tape access, has caused a significant decline in the use of tape as a backup medium. There has been turmoil in the tape automation supply market with format consolidation to LTO and DAT, a smaller number of suppliers and dreadfully difficult trading conditions leading to many loss-making quarters, and years, for suppliers like Overland Storage, Quantum and Tandberg.

The flip side of this has been a series of boom years for EMC with its Data Domain deduping backup-to-disk business.
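As a rough illustration of why deduplication lowers the effective cost/GB of backup to disk, here is a minimal sketch. It assumes fixed-size chunks and SHA-256 fingerprints; the function names, the chunk size and the cost figure are hypothetical and do not describe how Data Domain or any other product actually works.

```python
import hashlib


def dedupe_store(backups, chunk_size=4):
    """Store each distinct fixed-size chunk once, keyed by its SHA-256 digest.

    Returns (logical_bytes, physical_bytes): bytes the backups asked to
    store versus bytes actually kept after deduplication.
    """
    store = {}
    logical = 0
    for data in backups:
        for i in range(0, len(data), chunk_size):
            chunk = data[i:i + chunk_size]
            logical += len(chunk)
            # A chunk seen before costs no extra space.
            store.setdefault(hashlib.sha256(chunk).digest(), chunk)
    physical = sum(len(c) for c in store.values())
    return logical, physical


def effective_cost_per_gb(raw_cost_per_gb, logical, physical):
    """Raw disk cost scaled by the fraction of data actually stored."""
    return raw_cost_per_gb * physical / logical


# Two nightly backups that share most of their content (toy data).
logical, physical = dedupe_store([b"AAAABBBBCCCC", b"AAAABBBBDDDD"])
cost = effective_cost_per_gb(3.0, logical, physical)
```

In this toy case 24 logical bytes shrink to 16 stored bytes, so a hypothetical raw cost of 3.0 per GB becomes an effective 2.0. Repeated full backups of largely unchanged data would push the ratio much further.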

While this was happening, server virtualisation was also on the rise, with EMC once again buying a company and driving the usage of its technology to a dominant position.

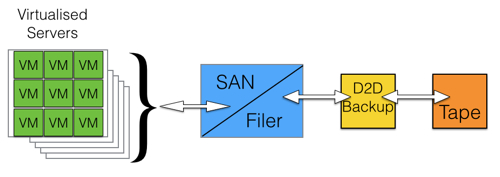

Step 3 - server virtualisation.

The effect of virtualised servers, with each virtual server needing its own access pipe to networked storage, was to point up the scalability limitations of networked storage.

Then iSCSI SANs came along, providing block data access over Ethernet, and they were positioned as cheap SANs.

SAN and NAS became unified and a separate scale-out approach was developed – in contrast to the traditional scale-up and then fork-lift upgrade when the scale-up limits were reached.

Scale-out meant a networked storage resource could support more servers/virtual servers, but their data access was slowed by network latency and disk data access latency. The answer to that problem was flash.

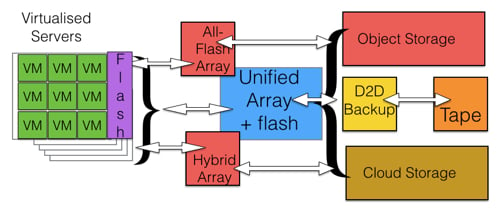

Step 4

Flash appeared inside arrays, with SSDs replacing some hard disk drives and also being used as a cache inside the array, front-ending the disks.
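The idea of flash front-ending disks as a cache can be sketched as a small read cache with least-recently-used eviction. This is an assumption-laden toy: the class name, the dictionary standing in for the disks and the LRU policy are all hypothetical, and real arrays use their own caching algorithms.

```python
from collections import OrderedDict


class FlashReadCache:
    """Toy LRU read cache sitting in front of a slower backing store."""

    def __init__(self, backing, capacity):
        self.backing = backing      # dict of block_id -> data, standing in for disks
        self.capacity = capacity    # number of blocks the "flash" can hold
        self.cache = OrderedDict()  # least recently used block is first
        self.hits = 0
        self.misses = 0

    def read(self, block_id):
        if block_id in self.cache:
            # Served from flash: mark the block as most recently used.
            self.cache.move_to_end(block_id)
            self.hits += 1
            return self.cache[block_id]
        # Miss: fetch from disk, then keep a copy in flash.
        self.misses += 1
        data = self.backing[block_id]
        self.cache[block_id] = data
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)  # evict the least recently used block
        return data


disk = {0: b"a", 1: b"b", 2: b"c"}
array_cache = FlashReadCache(disk, capacity=2)
for block in (0, 1, 0, 2, 1):
    array_cache.read(block)
```

Only reads that hit the cache avoid the disk latency, which is why cache size and the access pattern matter so much in practice.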

Startup vendors thought freshly designed array software could make better use of flash, extending its endurance by reducing the number of writes, for example, and several kinds of flash array arrived:

- all-flash arrays from the likes of Texas Memory Systems and Violin Memory, followed by newer startups such as Pure Storage, Kaminario, Whiptail and SolidFire;

- hybrid flash/disk drive arrays combining flash speed with disk drive capacity from suppliers such as Nimble Storage, Tegile and Tintri; and

- all-flash versions of disk drive arrays, such as EMC's VNX, HDS's VSP, HP's 3PAR and Dell's Compellent.

Flash storage also became de rigueur in servers with direct-attach SSDs and PCIe flash cards, popularised enormously by Fusion-io.