
Calxeda hurls EnergyCore ARM at server chip Goliaths

Another David takes aim at Xeon, Opteron

Calxeda, formerly known as Smooth-Stone in reference to the river rock that the mythical David used in his sling to slay Goliath, doesn't think the server racket can wait for the 64-bit ARMv8 architecture (announced late last week) to be designed and tested in the next few years.

And that is why Calxeda has spent the past several years tweaking the 32-bit ARMv7 core to come up with its own system-on-chip (SoC) and related interconnect fabric suitable for hyperscale parallel and distributed computing where nodes have only modest memory needs.

Today, Calxeda takes the wraps off its much-anticipated ARM server processor, which has been given the name EnergyCore in reference to the fact that like other ARM chips used in smartphones and tablets, it is focused on doing computing work for the least amount of energy possible. The idea is that by switching to ARM cores, Calxeda can do a unit of computing work burning less juice than an x86 chip from Intel or Advanced Micro Devices, the Power chip from IBM, the Sparc T from Oracle, or the Itanium from Intel.

The EnergyCore ECX-1000 Series chips, as the first EnergyCores will be called, are based on the Cortex-A9 designs from ARM Holdings. The ECX-1000 chips are in fact based on a quad-core implementation of the Cortex-A9 chip, but like other server implementations of the ARM chips, such as the X-Gene announced last week by Applied Micro Circuits, there is a lot more to these chips than the core.

There is a slew of other stuff, including a fabric interconnect and a management controller that would otherwise be an add-on for the system board, on the chip. One big difference between the EnergyCore and X-Gene is that the latter is based on the 64-bit ARMv8 and won't ship until the second half of next year if all goes well at Applied Micro. And that will be early silicon. It remains to be seen when server makers will pick up the X-Gene chip and actually get it into servers, but that might take until 2013.

Calxeda thinks there's money to be made now, and for some workloads, the EnergyCore chips are going to fit the power bill. "ARM does for the processor world what Linux did for the operating system world," Karl Freund, vice president of marketing at Calxeda, tells El Reg. "It opens up the chip market to a whole lot of innovation."

The ECX-1000 chips are implemented in a 40 nanometer process and are manufactured by Taiwan Semiconductor Manufacturing Company, which seems to be the foundry of choice for server chip makers that don't have their own wafer baking facilities. Each Cortex-A9 core runs at 1.1GHz or 1.4GHz and includes a scalar floating point unit that can do single-precision or double-precision operations as well as a NEON SIMD media processing unit that has 64-bit and 128-bit registers and that can also do floating point ops.

The EnergyCore implements ARM's TrustZone security partitioning capabilities, just like other ARMv7 cores. Each core on the ECX-1000 chip has its own power domain, which means it can be turned on and off as needed to conserve power and reduce heat dissipation.

The Calxeda ECX-1000 ARM server chip

Each Cortex-A9 core in the ECX-1000 chip has 32KB of L1 data cache and 32KB of L1 instruction cache, plus a 4MB L2 cache that is shared across the four cores. These cores have an eight-stage pipeline and can do out-of-order execution, as is traditional for modern x86 and RISC processors.

Incidentally, the Cortex-A9 reference designs support from 16KB to 64KB of total L1 cache with up to 8MB of L2 cache spread across those cores and clock speeds as high as 2GHz. The cores process 32-bit instructions, but have a 64-bit data path to memory with another 8 bits for ECC data correction for a total of 72 bits. The ECX-1000 has a DDR3 memory controller that can support regular 1.5-volt DDR3 main memory or the 1.35-volt DDR3 low-power memory; 800MHz, 1.06GHz, and 1.33GHz memory speeds are supported.
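For those who like to check the sums, here is a minimal Python sketch of the memory-path arithmetic above. It assumes the quoted memory speeds are DDR3 transfer rates in millions of transfers per second (DDR3-800, DDR3-1066, DDR3-1333), which is our reading rather than anything Calxeda has stated, and the function names are made up for illustration. The bandwidth figures are theoretical peaks, not measured throughput.

```python
# Back-of-the-envelope figures for the ECX-1000 memory interface.
# Assumption (ours, not Calxeda's): the quoted memory speeds are DDR3
# transfer rates in millions of transfers per second (MT/s).

DATA_BITS = 64  # data path width to memory, per the article
ECC_BITS = 8    # extra bits carried for ECC, per the article


def total_path_bits(data_bits: int = DATA_BITS, ecc_bits: int = ECC_BITS) -> int:
    """Width of the full memory path, data plus ECC bits."""
    return data_bits + ecc_bits


def peak_bandwidth_mb_s(mega_transfers_per_sec: int, data_bits: int = DATA_BITS) -> int:
    """Theoretical peak in MB/s: transfers per second times payload bytes.

    ECC bits are left out because they carry no payload data.
    """
    return mega_transfers_per_sec * (data_bits // 8)


if __name__ == "__main__":
    print(f"Total path width: {total_path_bits()} bits")
    for rate in (800, 1066, 1333):
        print(f"{rate} MT/s -> {peak_bandwidth_mb_s(rate)} MB/s peak")
```

On those assumptions, the fastest supported memory tops out at a bit under 10.7GB/sec of peak bandwidth per chip.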

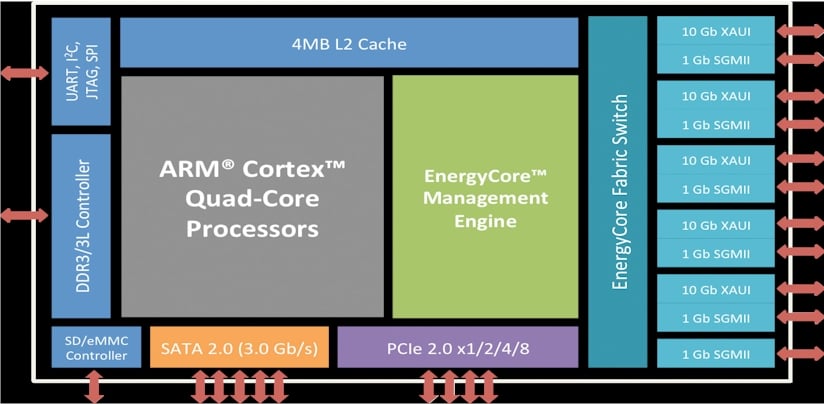

Here's a block diagram of the other goodies that are baked into the ECX-1000 ARM chips:

The ECX-1000 has four PCI-Express 2.0 controllers and one PCI-Express 1.0 controller, which can support up to two x8 lanes and four x1, x2, and x4 lanes. It also has an integrated Secure Digital/enhanced MultiMediaCard (SD/eMMC) controller, plus a SATA 2.0 controller capable of supporting up to five 3Gb/sec ports; older 1.5Gb/sec SATA devices are compatible with this controller, but newer 6Gb/sec peripherals are not.

The ECX-1000 also sports what Calxeda calls the EnergyCore Management Engine, which is akin to the baseboard management controller (BMC) on a server motherboard and provides out-of-band management for the processors and peripherals in the system. According to Freund, discrete on-board BMCs burn anywhere from one to four watts of juice and have an average manufacturing cost of $28 per node, so integrating this function on the chip cuts down on both power consumption and costs for large-scale server clusters.
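To get a feel for what those per-node figures mean at cluster scale, here is a rough Python sketch. It takes Freund's numbers ($28 and one to four watts per discrete BMC) at face value and assumes, optimistically, that the integrated engine adds no cost or power of its own, so the results are an upper bound. The function name and the 10,000-node cluster size are hypothetical.

```python
# Rough upper bound on what dropping discrete BMCs might save.
# Per-node figures are those quoted by Calxeda; the assumption that the
# on-chip management engine adds zero cost and zero watts is ours.


def bmc_savings(nodes: int,
                cost_per_node_usd: float = 28.0,
                watts_low: float = 1.0,
                watts_high: float = 4.0) -> dict:
    """Return the avoided BMC cost and power range for a cluster."""
    return {
        "cost_usd": nodes * cost_per_node_usd,
        "watts_low": nodes * watts_low,
        "watts_high": nodes * watts_high,
    }


if __name__ == "__main__":
    savings = bmc_savings(10_000)  # hypothetical cluster size
    print(f"Avoided BMC cost: ${savings['cost_usd']:,.0f}")
    print(f"Avoided BMC power: {savings['watts_low'] / 1000:.0f}kW "
          f"to {savings['watts_high'] / 1000:.0f}kW")
```

For that hypothetical 10,000-node cluster, the arithmetic works out to as much as $280,000 in parts and between 10kW and 40kW of power.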

This integrated management controller on the chip manages secure booting for the ARM cores, supports the IPMI 2.0 and DCMI management protocols, does dynamic power management and power capping, and provides a remote console over the serial-over-LAN (hilariously abbreviated SoL) protocol.

This on-chip control freak has one other very important job: configuring and optimizing the bandwidth allocated between the ECX-1000 cores and the EnergyCore Fabric Switch that is also etched onto the chips. Yup, not only is the ECX-1000 a quad-core chip with integrated controllers, it has its own switches. Bitch.