
NextIO punts I/O virtualizing Maestro

Put 'em in the rack and lash those servers into submission

NextIO, a maker of server I/O virtualization switches based on PCI-Express technologies, has announced the third and probably the most significant of its products. It's called vNET I/O Maestro and is being peddled as a server I/O virtualization appliance that can take the place of Ethernet and Fibre Channel switches at the top of server racks.

NextIO has already been selling a GPU coprocessor consolidation device called vCORE, which allows either four (vCORE Express) or 16 (vCORE) Nvidia GPUs to be plugged into a single chassis and linked to servers through the PCI-Express switch to create hybrid CPU-GPU supercomputers. The company also sells an appliance called the vSTOR S200, which puts 16 flash drives from Fusion-io into the PCI-Express bays of the chassis and allows them to be linked through the PCI-Express 2.0 switch to anywhere from one to eight servers inside a rack. Thanks to the nControl I/O Resource Manager inside the switch, the links between the servers and the PCI-Express GPU coprocessors or flash drives are virtualized and can be changed on the fly.

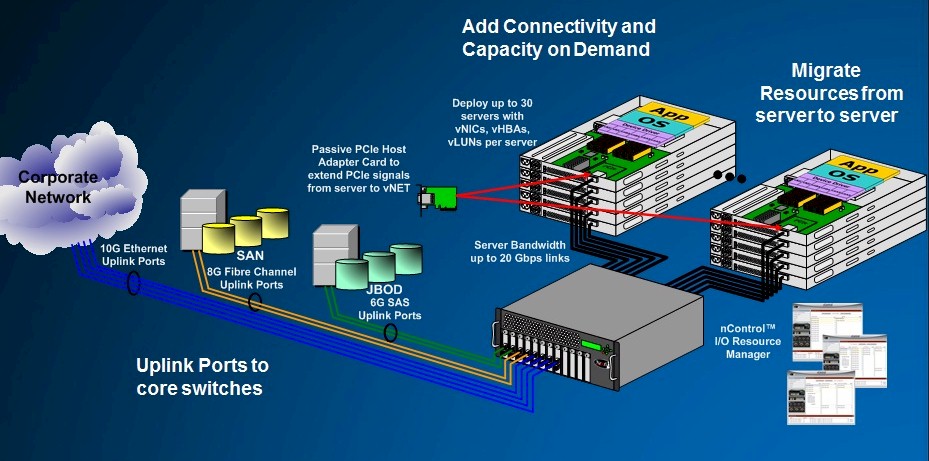

NextIO's vNET I/O Maestro switch

With the vNET Maestro, instead of plugging in flash drives or GPU coprocessors, the core NextIO PCI-Express switch can be configured with third-party Ethernet or Fibre Channel controllers and converted into a converged storage switch. NextIO is supporting a dual-port 10GE controller and a dual-port 8Gb/sec Fibre Channel controller in the I/O modules in the Maestro switch. The eight I/O modules come out of the front of the Maestro switch as uplinks to Ethernet and Fibre Channel switches. Out of the back end of the Maestro switch come single 10Gb/sec PCI-Express links, which attach to servers through a passthru card that plugs into a PCI-Express 1.0 slot. The passthru card itself is completely passive.

And here's the magic part: the Maestro switch presents the operating system on the server with the hardware running in the Maestro switch's I/O modules, and the driver is installed as if that Ethernet or Fibre Channel card were in a local PCI-Express slot. In fact, the operating system doesn't know the card isn't local. The Maestro switch then multiplexes the Ethernet and Fibre Channel traffic arriving over the single PCI-Express wires from up to 30 servers, and directs it to the appropriate device in the I/O modules and on into the core Ethernet network and Fibre Channel devices that the servers need to reach.

As more capacity is needed for any particular server, the nControl management software can redirect I/O requests to additional modules until that 10Gb/sec passthru card is saturated. If you need more I/O for a single server, you can add multiple passthru cards. The nControl software can keep hundreds of different server profiles around so that, as workloads change on your clusters of machines, you can match the I/O needs on servers and do so on the fly.
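The capacity rule in that paragraph is simple arithmetic: a server can draw on extra I/O modules only until its 10Gb/sec passthru link is full, after which it needs another passthru card. Below is a minimal sketch of that rule applied to stored server profiles. It assumes each profile's demand is known in Gb/sec. `ServerProfile`, `links_needed`, `plan` and the example profile figures are all hypothetical illustrations, not nControl's actual interface.

```python
import math
from dataclasses import dataclass

# Capacity of one passthru link, as stated in the article (PCI-Express 1.0 card).
LINK_GBPS = 10.0


@dataclass(frozen=True)
class ServerProfile:
    """Hypothetical I/O profile: a server's Ethernet and Fibre Channel demand in Gb/sec."""
    ethernet_gbps: float
    fc_gbps: float

    @property
    def total_gbps(self) -> float:
        return self.ethernet_gbps + self.fc_gbps


def links_needed(profile: ServerProfile, link_gbps: float = LINK_GBPS) -> int:
    """Fewest passthru links whose combined capacity covers the profile's demand."""
    # Every attached server has at least one passthru card, even when idle.
    return max(1, math.ceil(profile.total_gbps / link_gbps))


def plan(assignments: dict[str, str], profiles: dict[str, ServerProfile]) -> dict[str, int]:
    """Given server -> profile-name assignments, return server -> passthru links needed."""
    return {server: links_needed(profiles[name]) for server, name in assignments.items()}


# Hypothetical profiles; reassigning a server to another profile models a change "on the fly".
PROFILES = {
    "web": ServerProfile(ethernet_gbps=4.0, fc_gbps=0.0),
    "database": ServerProfile(ethernet_gbps=6.0, fc_gbps=8.0),
}

if __name__ == "__main__":
    print(plan({"srv01": "web", "srv02": "database"}, PROFILES))
```

With these made-up numbers, the web server fits in one link while the database server's 14Gb/sec demand spills onto a second passthru card.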

Here's what the Maestro topology looks like:

So where is support for PCI-Express 2.0, which brings 20Gb/sec capability, and what about PCI-Express 3.0, which will double that again to 40Gb/sec? KC Murphy, NextIO co-founder and CEO, tells El Reg that options for PCI-Express Gen 2 are coming, but that the company was "waiting for PCI-Express 3.0 to sort itself out a bit" because "the water is a little too deep there yet". Murphy says that for most workloads in the data center, a 10Gb/sec link is sufficient.

Most customers are advised to put two Maestro switches in a rack of servers for redundancy. The resulting racks use fewer adapter cards and run them at higher utilization, and they need less cabling and take up less space than racks built with a mix of top-of-rack Fibre Channel and Ethernet switches.

To give a sense of how much better the Maestro setup is compared to a mix of Fibre Channel and Ethernet switches, NextIO cooked up a scenario where a hypothetical customer wanted to virtualize 30 ProLiant DL360 servers using VMware's ESXi hypervisor. Each server has a single NextIO passthru card that presents eight Gigabit Ethernet and four Fibre Channel links, which are virtualized by nControl and are actually physically provided by four dual-port 10GE cards and two dual-port 8Gb/sec Fibre Channel cards parked in the front of the Maestro chassis.
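The consolidation in this scenario can be put in numbers using only the figures just given. The sketch below counts physical ports against virtual links and works out nominal bandwidth ratios. It assumes that all of the listed cards serve all 30 servers (the article mentions a redundant pair of switches without saying how the cards are split), that each virtual Gigabit Ethernet link could run at its full 1Gb/sec, and that each server uses one 10Gb/sec passthru link. The virtual Fibre Channel link speed isn't stated, so only Ethernet oversubscription is computed. `CardSpec` and `scenario_summary` are illustrative names.

```python
from typing import NamedTuple

# Figures from NextIO's 30-server scenario.
SERVERS = 30
SERVER_LINK_GBPS = 10        # assumption: one 10Gb/sec passthru link per server
VIRTUAL_GBE_PER_SERVER = 8   # Gigabit Ethernet links each server sees
VIRTUAL_FC_PER_SERVER = 4    # Fibre Channel links each server sees


class CardSpec(NamedTuple):
    """Physical adapters parked in the Maestro I/O modules."""
    cards: int
    ports_per_card: int
    gbps_per_port: int

    @property
    def ports(self) -> int:
        return self.cards * self.ports_per_card

    @property
    def gbps(self) -> int:
        return self.ports * self.gbps_per_port


ETHERNET = CardSpec(cards=4, ports_per_card=2, gbps_per_port=10)      # 80 Gb/sec
FIBRE_CHANNEL = CardSpec(cards=2, ports_per_card=2, gbps_per_port=8)  # 32 Gb/sec


def scenario_summary() -> dict[str, float]:
    # Assumption: every virtual GbE link could run flat out at 1 Gb/sec.
    virtual_gbe_gbps = SERVERS * VIRTUAL_GBE_PER_SERVER * 1
    server_side_gbps = SERVERS * SERVER_LINK_GBPS
    return {
        "physical_ports": ETHERNET.ports + FIBRE_CHANNEL.ports,
        "virtual_links": SERVERS * (VIRTUAL_GBE_PER_SERVER + VIRTUAL_FC_PER_SERVER),
        "ethernet_oversubscription": virtual_gbe_gbps / ETHERNET.gbps,
        "server_to_uplink_ratio": server_side_gbps / (ETHERNET.gbps + FIBRE_CHANNEL.gbps),
    }


if __name__ == "__main__":
    for key, value in scenario_summary().items():
        print(f"{key}: {value:g}")
```

Under those assumptions, 12 physical ports stand in for 360 virtual links, the virtual Gigabit Ethernet links are oversubscribed 3:1 against the 80Gb/sec of 10GE uplinks, and the 300Gb/sec of server-side links feeds roughly 2.7 times as much bandwidth as the 112Gb/sec of uplinks can carry. That bet only pays off because most servers rarely saturate their links at the same time.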

The servers don't need extra Ethernet or Fibre Channel adapters, so you can buy these 1U boxes instead of fatter machines. Add the cost of the servers and the Maestro PCI-Express switches together, and the setup costs $303,062, with about a third of that going to the Maestro switches and 60 PCI-Express cables.

To do this in a more traditional manner, you would need six 48-port Gigabit Ethernet switches with 10GE uplinks and six 24-port Fibre Channel switches with 8Gb/sec uplinks. For redundancy, you'd also need 60 Ethernet NICs and 60 4Gb/sec host bus adapters. And because you need that I/O, you need to get fatter servers (4U boxes instead of 1U boxes, in this case a ProLiant DL580 G7), which cost a little more money for the same performance.

This stack costs $534,600, with about a fifth coming from switches, a third coming from adapters and cables, and half coming from the servers.
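The capital-cost gap between the two configurations follows directly from the two totals quoted. This small sketch uses nothing but those dollar figures; `capex_savings` is an illustrative helper name.

```python
# Totals quoted in NextIO's scenario.
MAESTRO_TOTAL = 303_062      # 30 DL360 servers + Maestro switches + 60 PCI-Express cables
TRADITIONAL_TOTAL = 534_600  # 30 DL580 G7 servers + FC/Ethernet switches + adapters and cables


def capex_savings(new: float, old: float) -> float:
    """Fractional saving of the new configuration relative to the old one."""
    return (old - new) / old


if __name__ == "__main__":
    saving = capex_savings(MAESTRO_TOTAL, TRADITIONAL_TOTAL)
    print(f"Saving: ${TRADITIONAL_TOTAL - MAESTRO_TOTAL:,} ({saving:.1%})")
```

This prints a saving of $231,538, or about 43.3 per cent of the traditional stack's cost.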

"We can show a CapEx savings of 40 per cent, and then all of the operational savings – on the order of 60 per cent – are money in your pocket," says Murphy. ®