
SGI gets its HPC mojo back with CPU-GPU hybrids

Sticking it to racks and blades

SC10 If Silicon Graphics were still a standalone company and had not been eaten by hyperscale server maker Rackable Systems, there is a fairly good chance that the innovative "Project Mojo" hybrid CPU-GPU clusters, which pack a petaflops into a single cabinet, would not have seen the light of day.

And because two innovative server companies merged and come at HPC from two different angles, the Prism XL ceepie-geepies announced today at the SC10 supercomputing conference in New Orleans are coming to market precisely timed to the mainstreaming of GPUs in the HPC market.

SGI has been eagerly selling its "UltraViolet" Altix UV 1000 shared memory supercomputers, based on Intel's Xeon 7500 processors and SGI's home-grown NUMAlink 5 high-speed interconnect. And when it reported its financial results for the third quarter, it was bragging that it had sold a cumulative 63 Altix UV machines to date. While the company has not provided any sales projections for the Prism XL machines, it is safe to bet that the uptake for these hybrid, dense-pack machines will be at least as good if not better than for the Altix UV 1000s. It's horses for courses.

The high end of the current Altix UV 1000 line, which previewed at the SC09 show last year, scales to 256 sockets, 2,048 cores, and 16 TB in a single global memory space with 18.56 teraflops behind it. This machine is heavy on the shared memory and light on the floating point operations in its four racks, and because shared memory is technically difficult to do, the Altix UV 1000s will command a premium.

The Prism XL machines - the XL is not short for "extra large," but rather "accelerator" - are not quite as dense as we were led to believe when SGI announced Project Mojo back in June, promising to pack a petaflops into a rack by the end of this year. Yes, that sounds impossible, and yes, it is.

As El Reg anticipated, SGI was talking about single-precision floating point performance when it was talking about petaflops, and it was also talking about a custom cabinet that spans multiple rack enclosures. That single-precision petaflops is actually packed into two of SGI's new Destination 42U racks, with a third rack in the middle just for InfiniBand (for node interconnect) and Gigabit Ethernet (for node management) networking switches. You get to petaflops with a total of 504 of Advanced Micro Devices' FireStream 9350 single-wide fanless GPU co-processors, which were announced at the end of June.

The FireStream 9350s have killer single-precision number crunching at 2 teraflops per GPU, but only come in at 400 gigaflops at double precision. If you do the math, you don't need a supercomputer to see that this works out to only 201.6 teraflops DP across those 504 GPUs in the Prism XL cabinet.
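For anyone who wants to check the sums, here is a quick back-of-the-envelope sketch in Python. It uses only the figures quoted above (504 GPUs, 2 teraflops single precision and 400 gigaflops double precision per GPU, two compute racks); the variable names are made up for illustration, and the even split of GPUs across the two compute racks is an assumption, not something SGI has confirmed. These are peak ratings, not measured performance.

```python
# Figures quoted in the article for the Prism XL cabinet.
GPUS = 504                 # FireStream 9350 co-processors in the cabinet
SP_TFLOPS_PER_GPU = 2.0    # peak single precision, teraflops per GPU
DP_GFLOPS_PER_GPU = 400.0  # peak double precision, gigaflops per GPU
COMPUTE_RACKS = 2          # the third rack holds only network switches

# Aggregate peak ratings across the whole cabinet.
sp_total_tflops = GPUS * SP_TFLOPS_PER_GPU
dp_total_tflops = GPUS * DP_GFLOPS_PER_GPU / 1000.0

# Assumption: GPUs are split evenly between the two compute racks.
gpus_per_rack = GPUS // COMPUTE_RACKS

print(f"Single precision: {sp_total_tflops:.1f} teraflops "
      f"({sp_total_tflops / 1000.0:.3f} petaflops)")
print(f"Double precision: {dp_total_tflops:.1f} teraflops")
print(f"GPUs per compute rack (assuming an even split): {gpus_per_rack}")
```

The single-precision total just clears the petaflops bar, while the double-precision total is a fifth of that, which is the gap the marketing glossed over.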

Picking at it like that takes nothing away from the impressive density and packaging that the Prism XL machines offer compared to alternatives. SGI was intentionally vague to build excitement (as if all petaflops were created equal). This is a dubious strategy perhaps but certainly not a rare one in the IT racket.

Stick it to 'em

As El Reg explained in its exclusive in September about the Project Mojo designs, the Prism XL is employing a CPU-GPU packaging technique that SGI calls sticks.

Rather than starting with an idea for an enclosure - say a 2U rack or cookie sheet server - and then trying to see how many GPUs can be crammed into an x64 server before it catches fire, SGI's engineers working on the Prism XL machines started with the fanless GPU co-processors from AMD and Nvidia and the highly cored integer processors from Tilera (widely believed to be a funky MIPS variant) and the PCI-Express bus that they link into, and then architected a server to wrap around these co-processors. And so SGI created a deep and skinny server chassis that puts the GPUs in the back, a modest x64 server in the middle with its memory and disks, and networking, PCI-Express slots, and power supplies in the front for easy access.

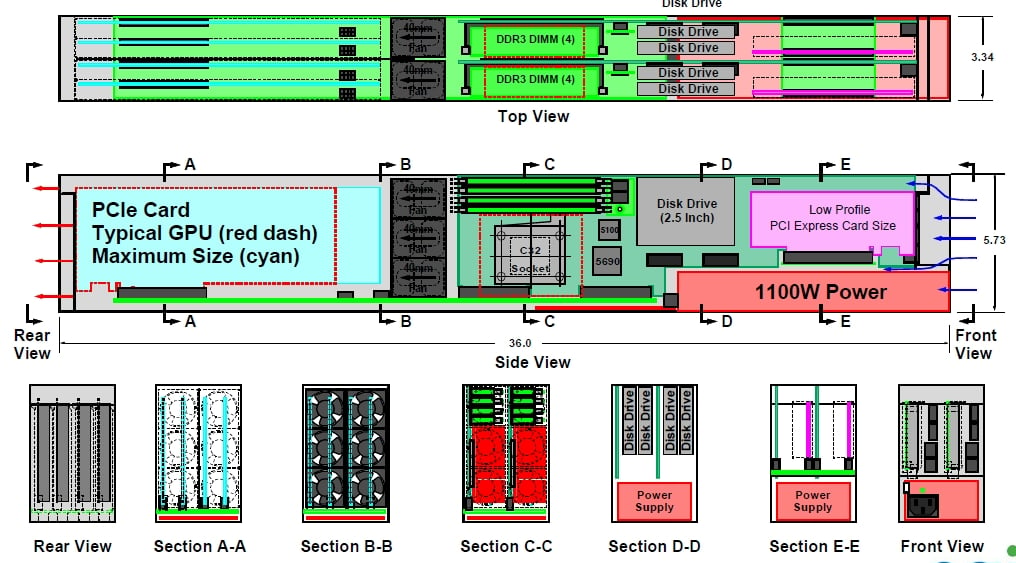

This 5.75 inch wide by 3.34 inch high by 37 inch chassis is what is called a stick. Here's the schematic drawing of what a stick looks like from the side and below:

You tip this unit on its side after wrapping it in metal, so the power supply is on the left side and the mobo and GPUs are lying horizontally.

According to Bill Mannel, vice president of product marketing at SGI, the server maker is using a reference motherboard for blade servers based on the Opteron 4100 processor from AMD for the x64 compute portion of the Project Mojo stick. This blade server uses AMD's 5690/5100 chipset and has four DDR3 memory slots running at 1.33 GHz; it has two Gigabit Ethernet ports and one Fast 10/100 Mbit port for management. The mobo also has an HTX slot that will eventually allow two adjacent boards in a stick to be configured as a two-socket, eight memory slot SMP server.