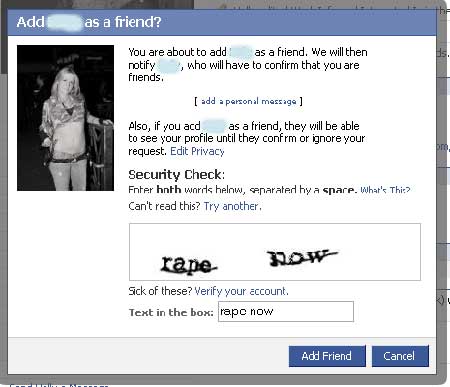

Facebook takes the Captcha rap

Stop the word verification madness

From time to time we capture word verification silliness for posterity. It's been a while, but we've got another for you, this time from Facebook - and this time it ain't so silly.

The text reads: "rape now". It was displayed next to the picture of a female work colleague our tipster (a Reg reader, of course) was adding to his list of Facebook friends.

While Facebook calls such scripts "security checks," the rest of the world knows them as captchas, short for completely automated public Turing Test to tell computers and humans apart. The point is to display an image that's not easily recognizable to a computer to ensure a real person is on the other end of a transaction.

The offending captcha was generated by ReCaptcha, a project sponsored by Carnegie Mellon University researchers working on a novel way of digitizing old books. Optical character recognition can stumble on as much as 30 per cent of the words it encounters. ReCaptcha displays them to users of Facebook and other sites. When they type in the corresponding text, the mystery is solved.

"Although there is very heavy filtering against offensive CAPTCHAs (we have over 1000 words in our list of offensive terms), in very rare cases, inappropriate words can be shown," Luis von Ahn, a professor working on the project, explained in an email. "We work very hard to keep such words out of the system, but in general it is impossible to guarantee that nothing offensive will ever be displayed."
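For the curious, the kind of list-based filtering von Ahn describes might look roughly like the sketch below. It is an illustration only, not ReCaptcha's actual code: the `BLOCKLIST` contents and the `is_displayable` helper are hypothetical, and the real list of more than 1000 terms is not reproduced here.

```python
import string

# Hypothetical stand-in for the list of offensive terms; placeholder entries only.
BLOCKLIST = {"badword", "worseword"}


def is_displayable(words, blocklist=BLOCKLIST):
    """Return True if no word in the challenge matches a blocklisted term.

    Words are lowercased and stripped of surrounding punctuation before
    the comparison, so "Badword," still matches "badword".
    """
    for word in words:
        normalized = word.lower().strip(string.punctuation)
        if normalized in blocklist:
            return False
    return True
```

A filter of this sort can only reject what is on its list and what it can read correctly. A term that is missing from the list passes straight through, which squares with von Ahn's point that no guarantee is possible.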

He said ReCaptcha displays about 30 million images every day and generally gets fewer than one complaint each month.

We knew there was something strange about the words being displayed on Facebook's security check. While cycling through we got combinations that included "mutton synagogue," "Toledo playmates" and "wiped president."

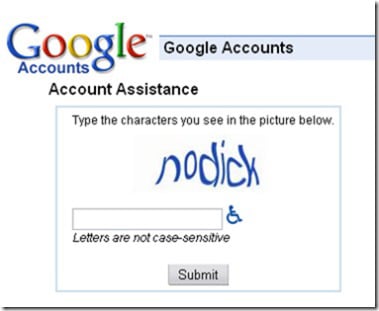

Von Ahn says this isn't the first cockup to affect a captcha, and pointed us to several examples, two of which we include below.

We see his point. ®